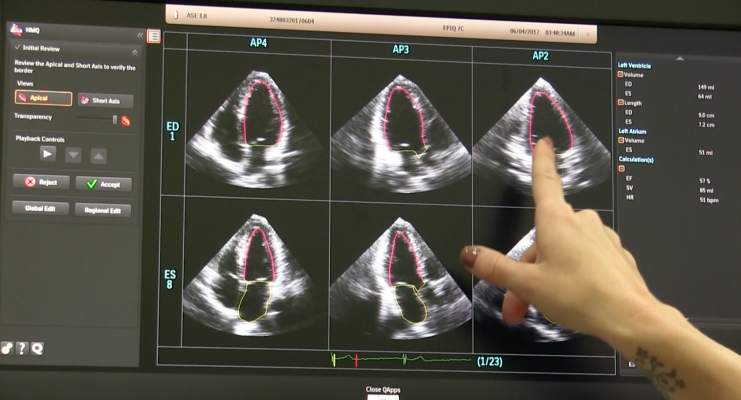

GE, Siemens and Philips are among the echocardiography vendors that incorporate deep learning algorithms into their echo software to help automatically extract standard imaging views from 3-D ultrasound datasets. This example shows the Philips Epiq system, which uses the vendor's Anatomical Intelligence software to identify anatomical structures and automatically display standard diagnostic views without human intervention. This can greatly speed workflow and reduce inter-operator variability.

Medical image analysis based on artificial intelligence (AI) employs convolutional neural networks, support vector machines, fuzzy logic systems and other machine learning methods to extract meaning from medical images. State-of-the-art computer vision software offers diagnosticians evidence-based suggestions, helps resolve uncertain cases and promotes diagnostic consistency.

Locating standard views is a crucial step in echocardiography, because these frames contain essential diagnostic data. Automatically capturing standard planes during an ultrasound examination can speed up scanning and make it more accurate. A close look at the research in this area shows that this is not speculation: computer-aided detection of standard views can give clinicians consistent support.

How Computers See Images

Medical image analysis is a practical application of computer vision, a branch of computer science dealing with object and feature recognition in digital images, including digital video frames. Computer vision algorithms analyze images through a range of processes similar to those performed by the human visual system. After initial preprocessing, which includes de-noising, filtering and feature enhancement, the software breaks an image down into meaningful regions in a process called image segmentation. The algorithm then extracts important features and classifies objects in the image based on these features. In addition, medical image analysis algorithms often perform image registration: the mapping of two or more images of the same anatomical structures onto each other to detect any difference or change.
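The preprocessing, segmentation and feature extraction steps described above can be sketched in a few lines of Python. This is a toy illustration only: the 3x3 mean filter, the fixed brightness threshold, the two features and the 5x5 "frame" are all assumptions chosen for brevity, not the methods used in any commercial echo system.

```python
def denoise(img):
    """3x3 mean filter; border pixels average over the neighbors that exist."""
    h, w = len(img), len(img[0])
    out = []
    for r in range(h):
        row = []
        for c in range(w):
            window = [img[rr][cc]
                      for rr in range(max(0, r - 1), min(h, r + 2))
                      for cc in range(max(0, c - 1), min(w, c + 2))]
            row.append(sum(window) / len(window))
        out.append(row)
    return out


def segment(img, threshold):
    """Binary mask: 1 where a pixel is brighter than the threshold."""
    return [[1 if p > threshold else 0 for p in row] for row in img]


def extract_features(img, mask):
    """Two toy features: region area fraction and mean brightness inside it."""
    inside = [p for row, mrow in zip(img, mask)
              for p, m in zip(row, mrow) if m]
    total = sum(len(row) for row in img)
    area = len(inside) / total
    brightness = sum(inside) / len(inside) if inside else 0.0
    return area, brightness


# Hypothetical 5x5 frame: a bright 3x3 structure plus one speckle (top right).
frame = [
    [0, 0, 0, 0, 9],
    [0, 9, 9, 9, 0],
    [0, 9, 9, 9, 0],
    [0, 9, 9, 9, 0],
    [0, 0, 0, 0, 0],
]

smoothed = denoise(frame)                 # preprocessing
mask = segment(smoothed, threshold=5.5)   # segmentation
features = extract_features(smoothed, mask)  # feature extraction
```

In this sketch the isolated speckle is averaged away by the filter and does not end up in the mask, while the core of the bright structure survives. The resulting feature tuple is the kind of input a classifier would then receive.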

Classification, which is based on machine learning, is the most sophisticated function of medical image analysis software. Machine learning methods serve as the “brain” of most modern AI systems. These algorithms let computers learn patterns from large amounts of data and apply them to similar data once training has finished. That is why this approach is so widely used in computer vision: trained on image datasets (e.g., a dataset of ultrasound images), the software then recognizes familiar features in real-world images (e.g., in a real-time ultrasound scan) and draws relevant conclusions from them.
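The train-then-recognize idea can be shown with one of the simplest possible learners, a nearest-centroid classifier. It is used here only as a stand-in for the more capable methods the article discusses; the feature values and the labels "standard view" and "other" are hypothetical.

```python
from math import dist


def train(samples):
    """Learn one mean feature vector (centroid) per label.

    samples: list of (feature_vector, label) pairs.
    """
    sums, counts = {}, {}
    for features, label in samples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc]
            for label, acc in sums.items()}


def classify(centroids, features):
    """Return the label whose centroid is closest to the new feature vector."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


# Hypothetical training set: (area fraction, mean brightness) per frame.
training_set = [
    ((0.20, 6.6), "standard view"),
    ((0.24, 6.2), "standard view"),
    ((0.05, 2.0), "other"),
    ((0.08, 3.0), "other"),
]

model = train(training_set)                 # learning phase
new_frame = (0.21, 6.0)                     # unseen frame after training
predicted = classify(model, new_frame)      # recognition phase
```

The split into `train` and `classify` mirrors the two phases described above: the model is built once from labeled examples and then applied to frames it has never seen.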

The accuracy of such systems rises with the amount of data fed into them. Starting with hundreds of images, they show decent results, and after processing thousands of images or more, their accuracy can approach 100 percent. Of course, accuracy also depends on the architecture used, and as machine learning methods develop, the algorithms used for medical image analysis show ever better results.

Applying Computer Vision to Echocardiography

Cardiac echo has a number of challenges that medical image analysis can solve. For example, researchers suggest the use of computer vision for automatic segmentation of anatomical structures, detection and classification of congenital heart defects, real-time catheter location, etc. Standard view acquisition, the most basic task in cardiac ultrasound, also can be accomplished by medical image analysis.

Standard View Acquisition

To locate standard heart views, software must select the appropriate 2-D planes from the multitude of frames taken during an ultrasound scan. The challenges differ depending on whether the input consists of 2-D frames, 3-D volumes, 2-D temporal sequences or 4-D spatio-temporal image correlation (STIC) volumes.

The last of these cases, STIC volumes, has been addressed by an international collaboration that suggested combining a scale-invariant feature transform (SIFT), a state-of-the-art feature detection algorithm, with a support vector machine (SVM), a supervised machine learning method. The approach was tested on a dataset containing both normal and abnormal cases. The software had to locate three standard planes: the four-chamber section (A4C), the three-vessel view (TVV) and the transverse abdominal section (TAS).
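To make the SVM part of this approach concrete, here is a minimal sketch of a linear SVM trained by subgradient descent on the hinge loss. It is not the researchers' implementation. The 2-D vectors stand in for SIFT-derived descriptors (real SIFT descriptors have 128 dimensions), the data is invented, and the task is simplified to a binary "standard plane" (+1) versus "other plane" (-1) decision.

```python
def train_linear_svm(points, labels, epochs=1000, lr=0.01, reg=0.01):
    """Per-sample subgradient descent on L2-regularized hinge loss.

    labels must be +1 or -1. Returns the weight vector w and bias b.
    """
    w = [0.0] * len(points[0])
    b = 0.0
    for _ in range(epochs):
        for x, y in zip(points, labels):
            margin = y * (sum(wi * xi for wi, xi in zip(w, x)) + b)
            # The regularization term shrinks the weights on every step.
            w = [wi - lr * reg * wi for wi in w]
            # The hinge term only contributes when the margin is violated.
            if margin < 1:
                w = [wi + lr * y * xi for wi, xi in zip(w, x)]
                b += lr * y
    return w, b


def predict(w, b, x):
    """Sign of the decision function: +1 or -1."""
    score = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1 if score >= 0 else -1


# Hypothetical descriptors: +1 = standard plane, -1 = any other plane.
points = [(2, 2), (3, 3), (2, 3), (0, 0), (1, 0), (0, 1)]
labels = [1, 1, 1, -1, -1, -1]

w, b = train_linear_svm(points, labels)
```

A three-plane problem such as A4C, TVV and TAS could be handled by training one such classifier per plane (one-versus-rest), although the source does not say how the study combined its classifiers.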

The method performed well on synthetic data: it detected standard heart views among randomly selected planes with an accuracy of 87-100 percent. On real volume data, however, performance was moderate, with an accuracy of 33-53 percent. Still, on both datasets the method outperformed previous approaches. In the future, the researchers plan to improve accuracy by applying a deep convolutional neural network (CNN), one of the most promising machine learning methods for image analysis. Another collaboration took a related approach: they applied a fused deep learning framework based on two CNNs to locate eight standard heart views in 3-D echo and achieved an accuracy of 92.1 percent. When locating only three primary planes, the accuracy was as high as 98 percent.
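One common way to fuse two networks is to average their class probabilities, and the accuracy figures quoted above are simply the share of correctly labeled planes. The sketch below illustrates both ideas. The source does not describe how the two CNNs were actually fused, so the averaging rule, the logit values and the view names are assumptions.

```python
from math import exp


def softmax(logits):
    """Convert raw network outputs into probabilities that sum to 1."""
    shift = max(logits)  # subtract the maximum for numerical stability
    exps = [exp(v - shift) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fuse(logits_a, logits_b):
    """Late fusion: average the class probabilities of two models."""
    probs_a, probs_b = softmax(logits_a), softmax(logits_b)
    return [(pa + pb) / 2 for pa, pb in zip(probs_a, probs_b)]


def predict(probs, view_names):
    """Pick the view with the highest fused probability."""
    best = max(range(len(probs)), key=probs.__getitem__)
    return view_names[best]


def accuracy(predicted, actual):
    """Fraction of predictions that match the reference labels."""
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


views = ["view_1", "view_2", "view_3"]  # hypothetical view names

# Network A narrowly prefers view_1; network B is confident in view_2.
fused = fuse([2.0, 1.9, 0.1], [0.2, 3.0, 0.1])
choice = predict(fused, views)

# Accuracy over four hypothetical test planes, one of them mislabeled.
score = accuracy(["view_1", "view_2", "view_3", "view_1"],
                 ["view_1", "view_2", "view_3", "view_2"])
```

In this example the fused prediction follows the more confident network, which is the intuition behind combining two models: a hesitant vote from one can be outweighed by a clear vote from the other.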

It is worth noting that both studies used a relatively small amount of data to train their systems: the SVM and CNN systems were fed data corresponding to several hundred ultrasound plane images. This may be enough to test a system’s performance, but machine learning software generally performs better after training on larger datasets.

State-Of-The-Art and Expectations

Today, CNNs are considered the most powerful classification technique in machine learning. Specifically designed to analyze images, they show spectacular image classification accuracy. In some tasks they have already outperformed humans, as the annual ImageNet visual recognition challenge has shown. The winning ImageNet research team had millions of labeled images with which to train its convolutional neural network. So, with the amount of medical image data growing, we can expect medical image analysis software to soon become an essential part of ultrasound systems.

Related AI Content

Read the article “How Artificial Intelligence Will Change Medical Imaging.”

Watch the VIDEO "Expanding Role for Artificial Intelligence in Medical Imaging," from HIMSS 2017.

May 04, 2026
